Neural Flow 3202560223 Apex Node

The Neural Flow 3202560223 Apex Node encapsulates selective flow control within intermediate neural layers. It aims to preserve gradient stability while enabling modular composition and reduced off-device communication. The approach emphasizes data locality and determinism to support on-device inference. Its architecture promises clearer neural flow abstractions and scalable deployment. Yet questions remain about trade-offs in latency, resource usage, and integration with existing models, inviting careful assessment of its practical impact.

What the Neural Flow 3202560223 Apex Node Is and Why It Matters

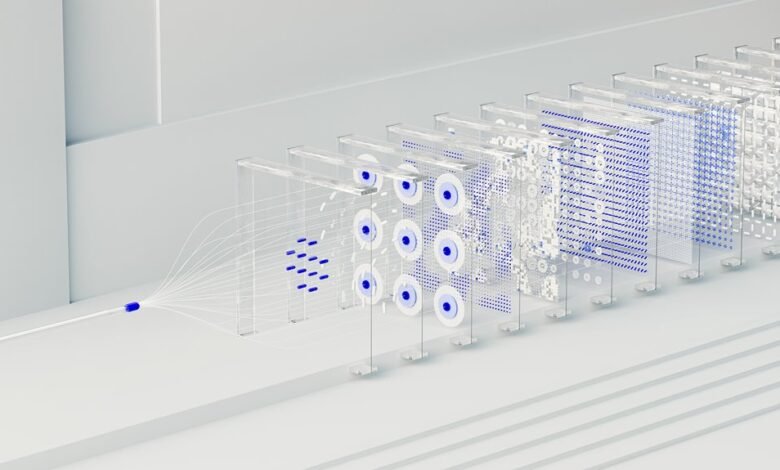

The Neural Flow 3202560223 Apex Node represents a specialized building block within contemporary neural network architectures, designed to streamline data processing and decision routing at intermediate layers.

It enables modular composition by isolating flow decisions and preserving gradient stability.

This situates neural flow topics within practical pipelines, supporting robust on-device inference while maintaining clear abstraction boundaries that keep the model analyzable.

How the Apex Node Architecture Drives On-Device Inference

The Apex Node architecture enables streamlined on-device inference by embedding selective flow control directly within model pathways, thereby reducing off-device communication and latency. The design emphasizes deterministic execution paths and aligns compute with the resources available on the device.
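
To make the idea of selective, deterministic flow control concrete, here is a minimal sketch in plain Python. The function names (`apex_gate`, `light_path`, `heavy_path`), the mean-absolute-activation score, and the threshold are all hypothetical, chosen only for illustration; the article does not specify how the Apex Node actually scores or routes its inputs.

```python
from typing import Sequence

Vector = Sequence[float]


def light_path(x: Vector) -> list[float]:
    """Cheap branch: a stand-in for a small sub-network."""
    return [0.5 * v for v in x]


def heavy_path(x: Vector) -> list[float]:
    """Costly branch: a stand-in for a larger sub-network."""
    return [v * v + 1.0 for v in x]


def apex_gate(x: Vector, threshold: float = 1.0) -> tuple[str, list[float]]:
    """Route a non-empty input to one branch based on a fixed rule.

    The rule uses no randomness, so the same input always takes the
    same branch. This is the determinism property in its simplest form.
    """
    score = sum(abs(v) for v in x) / len(x)  # mean absolute activation
    if score >= threshold:
        return "heavy", heavy_path(x)
    return "light", light_path(x)


branch, output = apex_gate([2.0, -2.0])
print(branch, output)
```

Because the routing rule is a pure function of the input, the cost of each inference can be bounded ahead of time by the cost of the more expensive branch, which is one way a design like this could align compute with available resources.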

Edge inference performance hinges on managing memory bandwidth and keeping data close to the compute that uses it. Careful scheduling and partitioning minimize stalls, enabling predictable throughput without sacrificing flexibility or scalability.
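
One common way to keep data local is to split a large buffer into tiles that each fit a fixed memory budget. The sketch below shows that partitioning step only. The function name `partition_into_tiles` and its parameters are assumptions made for illustration, not part of any documented Apex Node interface.

```python
def partition_into_tiles(
    num_elements: int, bytes_per_element: int, budget_bytes: int
) -> list[tuple[int, int]]:
    """Return (start, stop) index ranges whose data fits within budget_bytes."""
    if bytes_per_element <= 0 or budget_bytes < bytes_per_element:
        raise ValueError("budget must hold at least one element")
    tile = budget_bytes // bytes_per_element  # elements per tile
    return [
        (start, min(start + tile, num_elements))
        for start in range(0, num_elements, tile)
    ]


# Ten 4-byte values with a 16-byte budget: at most four elements per tile.
print(partition_into_tiles(10, 4, 16))
```

Processing one tile at a time keeps the working set within the budget, so a scheduler can reason about memory traffic per tile instead of per model.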

Real-World Use Cases: Mobile Apps, Robotics, and IoT

Mobile platforms, robotic systems, and IoT devices increasingly rely on edge inference to reduce latency and preserve bandwidth; assessing real-world deployments requires uniform evaluation criteria across modalities.

The discussion examines edge-cloud interplay and model compression as the central levers for scalable, portable workflows.
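
As one example of model compression, the sketch below applies symmetric 8-bit quantization to a list of weights. It is a generic illustration of the technique under simple assumptions (a single scale per list, values clamped to the range -127 to 127), not a description of how the Apex Node compresses models. The function names are hypothetical.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float values from the quantized integers."""
    return [q * scale for q in quantized]


weights = [1.0, -0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q, restored)
```

Each restored value differs from its original by at most half a quantization step, which is the accuracy cost traded for storing one byte per weight instead of four.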

Rigorous methodologies reveal deployment nuances, interoperability challenges, and optimization opportunities for constrained environments without compromising reliability or safety.

Performance Benchmarks and Trade-Offs to Consider

Assessing performance benchmarks and trade-offs requires a disciplined framework that compares latency, throughput, energy consumption, and accuracy across edge, cloud, and hybrid deployments. Such an analysis should stay objective, covering engineering trade-offs, on-device inference throughput, and energy efficiency. It should compare measurements under the same conditions, report their variability, and clarify the implications for teams building resilient, scalable, and transparent systems.
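
A minimal timing harness can cover the latency and throughput parts of such a framework. The sketch below uses only the Python standard library. The `benchmark` function and its result keys are hypothetical names, and energy and accuracy are deliberately left out because they need hardware counters and labeled data that this example does not assume.

```python
import statistics
import time
from typing import Any, Callable, Sequence


def benchmark(
    fn: Callable[[Any], Any], inputs: Sequence[Any], warmup: int = 3
) -> dict[str, float]:
    """Time fn on each input and summarize latency and throughput."""
    for x in inputs[:warmup]:  # warm-up calls are not measured
        fn(x)
    latencies = []
    for x in inputs:
        start = time.perf_counter()
        fn(x)
        latencies.append(time.perf_counter() - start)
    total = sum(latencies)
    return {
        "median_latency_s": statistics.median(latencies),
        "max_latency_s": max(latencies),  # a rough view of variability
        "throughput_per_s": len(inputs) / total if total > 0 else float("inf"),
    }


result = benchmark(lambda x: x * 2, list(range(100)))
print(result)
```

Reporting the maximum alongside the median gives a first indication of variability; running the same harness on each deployment target keeps the comparison consistent.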

Conclusion

The Neural Flow 3202560223 Apex Node represents a step toward predictable, on-device reasoning. By coupling modular flow control with gradient-stable paths, it delineates clear compute boundaries while preserving training dynamics. Its architecture promises reduced latency and enhanced data locality, yet invites scrutiny of edge-case behaviors and resource trade-offs. As architectures grow more autonomous at the edge, the Apex Node offers a promising link between theory and practical, resource-aware inference, one that remains to be fully explored.